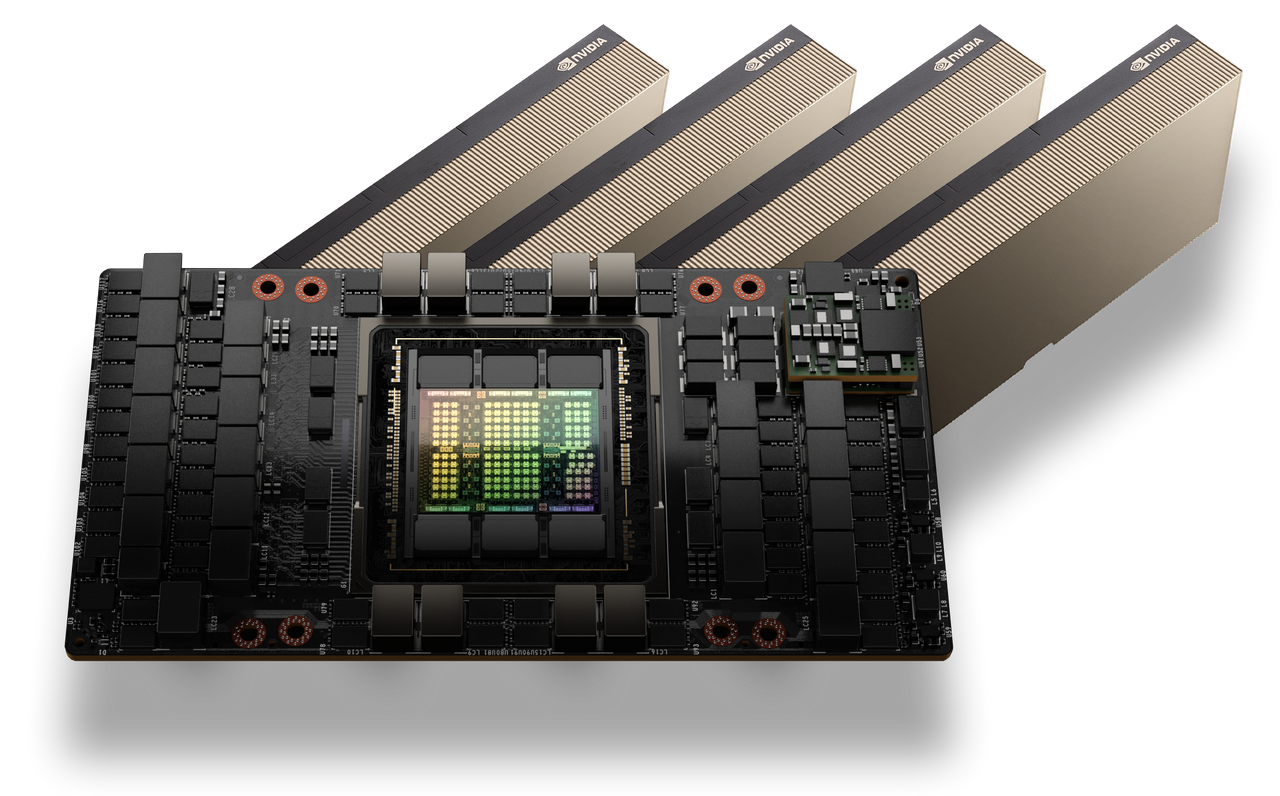

NVIDIA H100 GPUs: A Complete Buyer’s Guide for AI, HPC, and Data Centers

The NVIDIA H100 GPU, built on the Hopper architecture, targets high-performance computing (HPC), artificial intelligence (AI), and deep learning workloads. This NVIDIA H100 GPU buying guide walks through the purchasing process, compares the available models, and outlines the key factors to weigh before buying an H100.

Key Specifications of the NVIDIA H100 GPU

Before diving into the buying process, it’s essential to understand the technical highlights of the H100:

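- Architecture: Hopper, built on TSMC's 4N (4 nm-class) process

- Memory: Up to 80 GB (HBM3 on the SXM model, HBM2e on the PCIe model)

- Performance: Over 60 TFLOPS of FP64 Tensor Core performance on the SXM model (about 34 TFLOPS for standard FP64)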

- Connectivity: NVLink and PCIe Gen5 support

- Cooling Options: Air-cooled and liquid-cooled variants available

These specifications make the H100 ideal for intensive AI training, large-scale simulations, and other demanding workloads.

Choosing Between H100 Models: SXM vs. PCIe

The H100 comes in two primary configurations: SXM and PCIe. Selecting the right one depends on your use case and existing infrastructure.

SXM Model

- Advantages:

  - Superior performance due to higher power envelopes (up to 700 W)

  - NVSwitch support for multi-GPU setups, enabling seamless scalability

- Industries and Workloads:

  - Commonly used in AI research labs and data centers running massive neural network training

  - Ideal for scientific simulations in fields like climate modeling and genomics

- Considerations:

  - Requires specialized servers with NVLink support

  - Higher initial investment

PCIe Model

- Advantages:

  - Compatible with a broader range of systems

  - Easier to integrate into existing setups

- Industries and Workloads:

  - Often used in smaller enterprises or startups for inference workloads and moderate-scale machine learning projects

  - Suitable for industries such as financial analytics and media rendering

- Considerations:

  - Lower performance than SXM, reflecting its smaller power envelope (up to 350 W TDP)

  - Limited scalability compared to SXM

For buyers aiming for maximum performance and scalability, the SXM variant is the way to go. However, the PCIe model offers a reliable alternative if budget and compatibility are concerns.
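The power figures quoted above (up to 700 W for SXM, up to 350 W for PCIe) also affect how many GPUs a rack can host. The sketch below is a rough budgeting aid, not a sizing tool: the rack budget, the GPUs-per-server count, and the per-server overhead are hypothetical placeholders that should be replaced with figures from your own facility and server vendor.

```python
# Rough power-budget sketch: how many GPUs fit under a rack power limit.
# The 700 W and 350 W values are the per-GPU maximums quoted in this guide;
# every other number below is a hypothetical placeholder.

GPU_MAX_WATTS = {"SXM": 700, "PCIe": 350}


def gpus_per_rack(rack_budget_watts: float, form_factor: str,
                  gpus_per_server: int, server_overhead_watts: float) -> int:
    """Return how many GPUs fit in a rack, counting whole servers only."""
    per_server = gpus_per_server * GPU_MAX_WATTS[form_factor] + server_overhead_watts
    servers = int(rack_budget_watts // per_server)
    return servers * gpus_per_server


if __name__ == "__main__":
    # Hypothetical 40 kW rack; 8-GPU servers with 2 kW of CPU, fan, and NIC overhead.
    for form_factor in ("SXM", "PCIe"):
        count = gpus_per_rack(40_000, form_factor, gpus_per_server=8,
                              server_overhead_watts=2_000)
        print(f"{form_factor}: {count} GPUs")
```

A count like this only covers electrical capacity. Cooling capacity and physical space impose their own limits and need to be checked separately.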

Cooling Options: Air-Cooled vs. Liquid-Cooled

Cooling is a critical factor, especially when deploying GPUs in data centers.

- Air-Cooled Models:

  - Easier to maintain and install

  - Cost-effective for smaller setups

  - Example Scenario: A startup with limited resources and moderate GPU usage might prefer air-cooled models for simplicity and lower initial costs.

- Liquid-Cooled Models:

  - Enhanced cooling efficiency for dense configurations

  - Reduced energy costs over time

  - Ideal for environments with limited airflow

  - Example Scenario: A data center running multiple H100 GPUs in a confined space could benefit from liquid cooling to avoid overheating and reduce energy expenses in the long term.

Cost Comparison:

- Air-cooled models generally have a lower upfront cost but may incur higher operational costs due to less efficient cooling.

- Liquid-cooled models require higher initial investment in infrastructure but can lead to savings through improved energy efficiency and performance consistency.

Selecting a cooling solution depends on your operational environment and power consumption goals. Liquid cooling is increasingly popular for its long-term efficiency, despite higher upfront costs.
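The trade-off between upfront and operating costs can be made concrete with a simple break-even estimate. The sketch below assumes cooling efficiency is expressed as PUE (power usage effectiveness: total facility power divided by IT power). The PUE values, electricity price, IT load, and extra upfront cost are all hypothetical inputs rather than measured or vendor figures.

```python
# Break-even sketch for air vs. liquid cooling.
# All numeric inputs in the example are hypothetical placeholders.

HOURS_PER_YEAR = 365 * 24


def total_cost(upfront: float, it_load_kw: float, pue: float,
               price_per_kwh: float, years: float) -> float:
    """Upfront cost plus facility energy cost over the given period."""
    energy_kwh = it_load_kw * pue * HOURS_PER_YEAR * years
    return upfront + energy_kwh * price_per_kwh


def break_even_years(extra_upfront: float, it_load_kw: float,
                     pue_air: float, pue_liquid: float,
                     price_per_kwh: float) -> float:
    """Years until liquid cooling's energy savings repay its extra upfront cost."""
    saved_kw = it_load_kw * (pue_air - pue_liquid)
    if saved_kw <= 0:
        return float("inf")  # no energy saving, so the extra cost is never repaid
    yearly_saving = saved_kw * HOURS_PER_YEAR * price_per_kwh
    return extra_upfront / yearly_saving


if __name__ == "__main__":
    # Hypothetical: 10 kW of GPUs, PUE 1.5 (air) vs. 1.2 (liquid),
    # $0.10 per kWh, and $20,000 of extra liquid-cooling infrastructure.
    years = break_even_years(20_000, 10, 1.5, 1.2, 0.10)
    print(f"Break-even after about {years:.1f} years")
```

If the break-even point lies beyond the hardware's planned service life, air cooling is likely the cheaper choice on energy cost alone. Density or airflow constraints may still favor liquid cooling.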

Comparing H100 to A100: Is It Worth the Upgrade?

Many potential buyers wonder if upgrading from NVIDIA’s A100 to the H100 is worthwhile. Here’s a comparison:

| Feature | NVIDIA A100 | NVIDIA H100 |
| --- | --- | --- |
| Architecture | Ampere | Hopper |
| Memory | 40 GB HBM2 or 80 GB HBM2e | 80 GB HBM3 (SXM) or HBM2e (PCIe) |
| FP64 Tensor Core performance | 19.5 TFLOPS | 60+ TFLOPS (SXM) |
| Connectivity | PCIe Gen4, NVLink | PCIe Gen5, NVLink |
| Applications | Versatile but less AI-optimized | Superior for AI, HPC, and large datasets |

Use Case Examples:

- A100:

  - Suitable for general-purpose HPC tasks such as financial modeling, drug discovery, and virtual simulations where extreme AI performance is not the primary focus.

  - Frequently deployed in hybrid systems for a mix of compute-heavy and memory-intensive workloads.

- H100:

  - Ideal for cutting-edge AI research, such as training large language models or conducting real-time analytics on massive datasets.

  - Perfect for industries like autonomous vehicle development, real-time fraud detection in finance, and personalized recommendation engines in e-commerce.

While the A100 remains a capable choice for general HPC tasks, the H100 significantly outperforms it in AI and data-intensive applications, justifying the upgrade for users with demanding workloads.

Factors to Consider Before Purchasing an H100 GPU

1. Budget

The H100 is a premium product, with prices varying based on configuration and vendor. Define your budget and explore financing options if needed.

2. Compatibility

Ensure your existing hardware can support the H100. For SXM variants, a compatible server with NVLink is essential.

3. Use Case

Match the GPU’s capabilities to your workload. For AI and deep learning tasks, the H100 offers a substantial performance gain over the previous-generation A100.

4. Vendor Reliability

Purchase from reputable vendors to avoid counterfeit products. Authorized resellers and trusted marketplaces should be prioritized.

5. Future Scalability

Plan for future expansions. If you anticipate scaling your operations, opt for configurations with higher connectivity and cooling efficiency.
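For the compatibility check in particular, it can help to inventory the GPUs already installed in a candidate server before ordering. The sketch below is one possible approach and assumes the NVIDIA driver and its `nvidia-smi` tool are present on the host. The function names are illustrative, and the sample line in the demo is a made-up example of the CSV layout, not captured output.

```python
import csv
import io
import subprocess

# Fields requested from nvidia-smi; with "nounits", memory is reported in MiB
# and the power limit in watts.
QUERY_FIELDS = ["name", "memory.total", "power.limit", "pcie.link.gen.max"]


def parse_gpu_rows(csv_text: str) -> list[dict[str, str]]:
    """Turn nvidia-smi CSV output (no header) into one dict per GPU."""
    reader = csv.reader(io.StringIO(csv_text), skipinitialspace=True)
    return [dict(zip(QUERY_FIELDS, (cell.strip() for cell in row)))
            for row in reader if row]


def query_installed_gpus() -> list[dict[str, str]]:
    """Run nvidia-smi and return the parsed rows (requires an NVIDIA driver)."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={','.join(QUERY_FIELDS)}",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_rows(result.stdout)


if __name__ == "__main__":
    # Made-up sample line showing the expected layout; on a real host,
    # call query_installed_gpus() instead.
    sample = "Example GPU, 81920, 400.00, 4\n"
    for gpu in parse_gpu_rows(sample):
        print(gpu)
```

A maximum PCIe link generation below 5 suggests that a PCIe H100 would not run at its full Gen5 link speed in that system. Power delivery and physical fit still need to be confirmed against the server vendor's documentation.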

Recommended Vendors and Pricing Insights

While pricing can vary, here are some tips for finding the best deals:

- Authorized Dealers: Look for NVIDIA-certified resellers.

- Bulk Discounts: For large deployments, negotiate with vendors.

- Warranty and Support: Ensure your purchase includes robust after-sales support.

FAQ: Frequently Asked Questions About NVIDIA H100 GPUs

1. What is the price range of the NVIDIA H100 GPU?

Prices vary significantly depending on the configuration, vendor, and availability. A single H100 has typically been priced in the tens of thousands of dollars, with PCIe variants at the lower end and SXM models with advanced cooling options costing more.

2. Can I use the H100 GPU for gaming?

While technically possible in limited setups, the H100 is designed for high-performance computing and AI tasks and has no display outputs, making it overkill and poorly suited for gaming. Gamers should consider NVIDIA’s GeForce RTX series instead.

3. What kind of server setup is required for the SXM model?

The SXM model requires specialized servers equipped with NVLink and compatible with the higher power requirements of the H100.

4. Is the H100 worth upgrading if I already have an A100?

If your workload includes cutting-edge AI or real-time data analytics, the H100’s superior performance can justify the upgrade. For general HPC tasks, the A100 may still suffice.

5. What cooling option should I choose for my H100 GPU?

- Air-Cooled: Best for smaller setups with adequate airflow.

- Liquid-Cooled: Ideal for dense configurations or environments with limited airflow, offering long-term energy savings.

6. Where can I buy NVIDIA H100 GPUs?

Purchase from NVIDIA-certified resellers, reputable online marketplaces, or directly through NVIDIA’s enterprise channels to ensure authenticity and support.

Conclusion

The NVIDIA H100 GPU is a game-changer for HPC, AI, and deep learning. By understanding the differences between models, cooling options, and use cases, you can make an informed purchasing decision. Ensure compatibility with your infrastructure, prioritize scalability, and choose a reliable vendor to maximize your investment.

Looking for tailored solutions to meet your business needs? At Saharatech, we specialize in providing high-quality products and expert consultancy services to help you achieve your goals. Whether you need assistance in sourcing the right products or strategic guidance, we’re here to help.

📞 Contact us today to discuss how we can support your business. Let’s grow together!